For reasons I’ve never understood, AWS’s S3 object file store does not offer metadata about the size and number of objects in a bucket. This meant that answering the simple question “How can I get the total size of an S3 bucket?” required a scan of the bucket to count the objects and total the size. This is slow, especially when you have millions of objects in a bucket.

In July 2015, AWS started collecting S3 metrics in CloudWatch. The metrics cover bucket size by storage type and the number of objects, and they are available in the CloudWatch console. I wanted a way to access this data programmatically and to produce output that could be fed to additional scripts for further analysis. Retrieving CloudWatch data is, of course, orders of magnitude faster than counting the objects in the bucket, so that was a major impetus for writing something as well.

What I came up with is below. It’s written as a PowerShell advanced function so that you can dot-source it and use it as you would any other cmdlet. I’ve written extensive help into the function, which can be accessed via Get-Help Get-S3BucketSize -Full after you’ve loaded the function. Three items of note: the -BucketName parameter can be passed via the pipeline; the output is a standard PSObject, meaning you can pipe the results to Out-GridView, Measure-Object or even Export-Csv; and size output is reported in gibibytes (2^30 bytes), not gigabytes (10^9 bytes).
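To make the gibibyte/gigabyte distinction concrete, here is a minimal sketch of the arithmetic. The byte count is a made-up value for illustration only; the one thing it relies on is that PowerShell's `GB` numeric suffix is binary (2^30 bytes), which is why dividing a byte count by `1GB` yields gibibytes.

```powershell
# Hypothetical bucket size in bytes (illustrative value, not real data)
$bytes = 5000000000

# PowerShell's GB suffix is binary: 1GB is 1073741824 (2^30) bytes
$gib = $bytes / 1GB    # size in gibibytes
$gb  = $bytes / 1e9    # size in decimal gigabytes

# Format both with two decimal places (separators follow the current culture)
'{0:N2} GiB' -f $gib
'{0:N2} GB'  -f $gb
```

The GiB figure is always the smaller of the two, so expect the numbers reported here to be lower than a decimal-gigabyte figure for the same bucket.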

There are many options and nuances in the CloudWatch S3 metrics, which I have tried to handle in the optional parameters and in the help information. But if you have a default AWS credential profile and region stored, you can just issue Get-S3BucketSize and you should get something useful. If you have specific questions about the results or parameters, please post in the comments below and I’ll try to answer them.
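Because the function emits one row per storage class plus a separate AllStorageTypes row carrying the object count, it can be handy to roll the rows up per bucket. The following is only a sketch: the `$rows` data is mocked by hand to mimic the output shape (Bucket, SizeGiB, NumObjects, StorageClass), and the `TotalGiB` property name is my own invention, not something the function produces.

```powershell
# Hand-made rows shaped like Get-S3BucketSize output (hypothetical values)
$rows = @(
    [pscustomobject]@{ Bucket = 'bucket-a'; SizeGiB = 1.5;  NumObjects = '';   StorageClass = 'StandardStorage' }
    [pscustomobject]@{ Bucket = 'bucket-a'; SizeGiB = 0.25; NumObjects = '';   StorageClass = 'StandardIAStorage' }
    [pscustomobject]@{ Bucket = 'bucket-a'; SizeGiB = '';   NumObjects = 1200; StorageClass = 'AllStorageTypes' }
    [pscustomobject]@{ Bucket = 'bucket-b'; SizeGiB = 10.0; NumObjects = '';   StorageClass = 'StandardStorage' }
    [pscustomobject]@{ Bucket = 'bucket-b'; SizeGiB = '';   NumObjects = 45;   StorageClass = 'AllStorageTypes' }
)

# One summary object per bucket: summed size plus the object count
$totals = $rows | Group-Object -Property Bucket | ForEach-Object {
    # Size rows carry SizeGiB; the AllStorageTypes row carries NumObjects
    $sizeRows = $_.Group | Where-Object { $_.StorageClass -ne 'AllStorageTypes' }
    $countRow = $_.Group | Where-Object { $_.StorageClass -eq 'AllStorageTypes' }

    [pscustomobject]@{
        Bucket     = $_.Name
        TotalGiB   = ($sizeRows | Measure-Object -Property SizeGiB -Sum).Sum
        NumObjects = $countRow.NumObjects
    }
}

$totals | Format-Table -AutoSize
```

With real data, replacing the mocked `$rows` with the output of Get-S3BucketSize should give the same kind of summary. Filtering out the AllStorageTypes rows before summing keeps their empty SizeGiB values out of Measure-Object.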

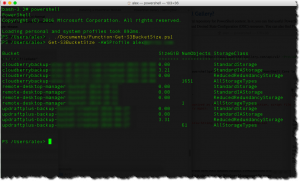

Now, for the best news of all. While I was writing this script, Microsoft open-sourced PowerShell (!!) and AWS announced support for the alpha (!!!). I have no words for how monumental this is… but maybe a screenshot will suffice. AFAIK, this screenshot may show one of the very first PowerShell functions to do anything useful in AWS via PowerShell on macOS (this was actually on the macOS Sierra beta). Anyway, I am really excited by all this interoperability, and by AWS’s quick support for it in a major way.

Import-Module AWSPowerShell

<#

.SYNOPSIS

Provides maximum or highest average bucket size in gibibytes and number of objects via AWS CloudWatch measurements for a specific S3 bucket or all buckets over a specified period of days.

.DESCRIPTION

Accepts a single bucket name or an array of bucket names via the pipeline to pass to AWS CloudWatch to retrieve metrics for all (default) or selected storage classes.

.PARAMETER BucketName

Lower-case name of S3 bucket.

.PARAMETER StorageClass

One of StandardStorage | StandardIAStorage | ReducedRedundancyStorage. Defaults to all storage classes. Results for storage class AllStorageTypes are always returned in order to provide the number of objects.

.PARAMETER AWSProfile

String containing the name of credentials created via New-AWSCredential. Defaults to the credentials stored in the default AWS profile, that is, whatever is authorized when no credentials are supplied.

.PARAMETER Days

The number of days for which to collect average or maximum CloudWatch metrics for S3 buckets. Defaults to 5.

.PARAMETER Statistic

The case-sensitive CloudWatch statistic to retrieve. Must be one of 'Maximum' or 'Average'. Defaults to 'Average'. 'Average' returns the highest daily average over the number of days selected.

.INPUTS

System.String

.OUTPUTS

System.Management.Automation.PSObject:

Bucket = Name of S3 Bucket

SizeGiB = Size in gibibytes of contents of bucket by storage class

NumObjects = Number of S3 objects in bucket across ALL storage classes

StorageClass = bucket storage class (excluding GLACIER class)

.EXAMPLE

PS C:\> Get-S3BucketSize

Outputs to the pipeline a collection of type PSObject that lists the average bucket size and number of objects in all buckets over the previous five days. Uses the default AWS credential profile.

.EXAMPLE

PS C:\> Get-S3BucketSize -BucketName 'BucketName' -Statistic 'Maximum' -AWSProfile 'myprofile'

Outputs to the pipeline a (single member) collection of type PSObject that lists the maximum bucket size and number of objects over the previous five days. Selects buckets based on 'myprofile'.

.EXAMPLE

PS C:\> Get-S3BucketSize -BucketName 'BucketName' -Days 14

Outputs to the pipeline a (single member) collection of type PSObject that lists the maximum average bucket size and number of objects over the previous 14 days. Uses the default AWS credential profile.

.EXAMPLE

PS C:\> Get-S3BucketSize | Measure-Object -Property SizeGiB -Sum

Sums the maximum average size over the last five days of all S3 buckets.

.EXAMPLE

PS C:\> Get-S3BucketSize | Measure-Object -Property NumObjects -Sum

Sums the maximum average number of objects over the last five days of all S3 buckets.

.EXAMPLE

PS C:\> Get-S3BucketSize -StorageClass StandardStorage | Measure-Object -Property SizeGiB -Sum

Pipes the maximum average size of StandardStorage over the last five days of all S3 buckets available to the current profile to Measure-Object which sums the total size of all S3 objects in those buckets.

.EXAMPLE

PS C:\> Import-Csv .\listofbuckets.csv | Get-S3BucketSize

Accepts from pipeline a list of buckets to be retrieved for measurement. The .csv file can be easily created with Get-S3Bucket | Export-Csv .\listofbuckets.csv and edited as required.

.EXAMPLE

PS C:\> Get-S3Bucket | Get-S3BucketSize

Outputs to the pipeline a collection of all S3 buckets' size and number of objects. This is equivalent to Get-S3BucketSize since it will also invoke Get-S3Bucket when -BucketName is omitted.

.NOTES

For more information on S3 metrics in CloudWatch, see http://docs.aws.amazon.com/AmazonS3/latest/dev/cloudwatch-monitoring.html

(c) 2016 Air11 Technology LLC -- licensed under the Apache OpenSource 2.0 license, https://opensource.org/licenses/Apache-2.0

Author's blog: https://yobyot.com

#>

function Get-S3BucketSize

{

[CmdletBinding()]

[OutputType([psobject])]

param

(

[Parameter(ValueFromPipelineByPropertyName = $true,

Position = 0,

HelpMessage = 'Lower-case name of S3 bucket')]

[System.String[]]$BucketName = 'All',

[Parameter(HelpMessage = 'Specify storage class ')]

[System.String]$StorageClass,

[Parameter(HelpMessage = 'Enter the name of the AWS credential profile to be used')]

[System.String]$AWSProfile,

[Parameter(HelpMessage = 'Enter an integer for the number of days to collect metrics')]

[ValidateRange(1, 14)]

[System.Int16]$Days = 5,

[System.String]$Statistic = 'Average'

)

begin

{

try

{

$obj = [ordered]@{

'Bucket' = ''

'SizeGiB' = ''

'NumObjects' = ''

'StorageClass' = ''

}

$results = @()

$daysAgo = (Get-Date ([datetime](Get-Date).AddDays(- $Days)) -Format s) # Date formats for Get-CWMetricStatistics MUST be in ISO format

$today = Get-Date -Format s # Date formats for Get-CWMetricStatistics MUST be in ISO format

if ($AWSProfile) { Set-AWSCredentials -ProfileName $AWSProfile }

if ($Statistic -cnotmatch '^(Maximum|Average)$') { $Statistic = "Average" } # Anything other than an exact, case-sensitive match falls back to 'Average'

Write-Verbose "Today=$today, DaysAgo=$daysAgo, AWSProfile=$AWSProfile, Statistic=$Statistic"

}

catch

{

Write-Error "An error occurred: $_" # $_ is the error caught by this block; $Error holds the whole session's error history

}

}

process

{

try

{

switch ($BucketName)

{

'All' {

$BucketNameStrings = Get-S3Bucket | Select-Object -ExpandProperty BucketName

foreach ($b in $BucketNameStrings)

{

switch ("$StorageClass")

{

"StandardStorage" {

$results += (getBucketSize "$b" 'StandardStorage')

}

"StandardIAStorage" {

$results += (getBucketSize "$b" 'StandardIAStorage')

}

"ReducedRedundancyStorage" {

$results += (getBucketSize "$b" 'ReducedRedundancyStorage')

}

default

{

#Get all classes

$results += getBucketSize $b 'StandardStorage'

$results += getBucketSize $b 'StandardIAStorage'

$results += getBucketSize $b 'ReducedRedundancyStorage'

}

}

$results += (getBucketNumObjects $b)

}

}

{ $_ -ne 'All' } # Script-block condition; inside the switch, $_ is the bucket name currently being processed

{

switch ("$StorageClass")

{

"StandardStorage" {

$results += (getBucketSize $_ 'StandardStorage')

}

"StandardIAStorage" {

$results += (getBucketSize $_ 'StandardIAStorage')

}

"ReducedRedundancyStorage" {

$results += (getBucketSize $_ 'ReducedRedundancyStorage')

}

default

{

#Get all classes

$results += (getBucketSize $_ 'StandardStorage')

$results += (getBucketSize $_ 'StandardIAStorage')

$results += (getBucketSize $_ 'ReducedRedundancyStorage')

}

}

$results += (getBucketNumObjects $_)

}

default { Write-Verbose "Neither 'All' nor individual bucket selected; big problem since default bucket name is 'All' " }

}

}

catch

{

Write-Error "An error occurred: $_" # $_ is the error caught by this block; $Error holds the whole session's error history

}

}

end

{

try

{

Write-Output $results

Write-Verbose "Done"

}

catch

{

Write-Error "An error occurred: $_" # $_ is the error caught by this block; $Error holds the whole session's error history

}

}

}

function getBucketSize ($bname, $stgclass)

{

Write-Verbose "getBucketSize entered with $bname and storage class $stgclass"

$metricSize = Get-CWMetricStatistics -Namespace 'AWS/S3' -MetricName 'BucketSizeBytes' `
-Dimension @(@{ Name = 'BucketName'; Value = "$bname" }; @{ Name = 'StorageType'; Value = "$stgclass" }) `
-Statistic $Statistic -Period 86400 -StartTime $daysAgo -EndTime $today

$maxSize = [math]::Round((($metricSize.Datapoints | Measure-Object -Property $Statistic -Maximum).Maximum / 1GB), 2) # Round numerically; a culture-formatted string may not cast back to [decimal] cleanly

$functionObj = New-Object -TypeName System.Management.Automation.PSObject -Property $obj

$functionObj.Bucket = [string]$bname

$functionObj.SizeGiB = [decimal]$maxSize

$functionObj.NumObjects = ''

$functionObj.StorageClass = $stgclass

$functionObj

}

function getBucketNumObjects ($bname)

{

Write-Verbose "getBucketNumObjects entered with $bname"

$metricNumObjects = Get-CWMetricStatistics -Namespace 'AWS/S3' -MetricName 'NumberOfObjects' `
-Dimension @(@{ Name = 'BucketName'; Value = "$bname" }; @{ Name = 'StorageType'; Value = 'AllStorageTypes' }) `
-Statistic $Statistic -Period 86400 -StartTime $daysAgo -EndTime $today

$numObjects = (($metricNumObjects.Datapoints | Measure-Object -Property $Statistic -Maximum).Maximum)

if (!$numObjects) { $numObjects = 0 }

$functionObj = New-Object -TypeName System.Management.Automation.PSObject -Property $obj

$functionObj.Bucket = [string]$bname

$functionObj.SizeGiB = ''

$functionObj.NumObjects = $numObjects

$functionObj.StorageClass = 'AllStorageTypes'

$functionObj

}
