Recently, I had the pleasure of being briefed on VMware’s AWS integration as part of Cloud Field Day 5. If you are an enterprise architect with a big investment in VMware, you might find the recordings of the briefings valuable.

As a bit of background, I was at the announcement of VMware on AWS at re:Invent 2016. I remember the audience going wild (as in Jimi Hendrix doing a psychedelic riff in “Hey Joe” level wild) when the presenters told the attendees they could vMotion their virtual machines to the cloud from an on-prem SDDC (or “Software Defined Data Center”).

As a cloud-first guy and virtualization purist from the early VM/370 days, I remember thinking two things. First, there was bound to be a “clash of hypervisors” and, second, putting two walled gardens next to each other creates…two separate walled gardens. How you react to that assertion has a lot to do with your current and past experience. VMware owns today’s enterprise data centers; the cloud owns tomorrow’s enterprise computing footprint.

I left the #CFD5 briefings thinking that VMware’s walls are still up with respect to AWS but, since 2016, portholes have been blasted into the walls. For example, VMware recently gained the ability to use AWS EBS volumes (see “Storage”) on r5.metal EC2 instances. That’s a mega-break in the wall because, prior to this, VMware needed bare metal i3.metal EC2 instances to provide access to block-attached SSDs for VMware’s file system. With the switch to EBS underway, the next question is performance. But, over time, like all performance barriers, this will be conquered, too.

But it’s network design that makes it most clear to me that VMware fundamentally perceives AWS as a hardware platform for its hypervisor as opposed to a virtualization platform in its own right. Consider the #CFD5 video in which Aarthi Raju presents the networking interfaces between VMware and AWS. At about 8:10 into it, Adam Post asks Aarthi about the speed at which VMware fails over subnet networking between VMware and the adjacent subnets in connected AWS VPCs. Her response generates a follow-on question from me at about 8:50 about how VMware does this — she responds that today VMware expects to be able to add routes in the main (or default) route table in the VPC for that subnet.

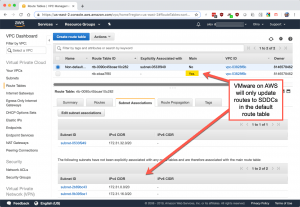

AWS architects will immediately understand the implications of this. Still, a picture is worth a thousand words, so consider the screenshot below of the stock (default) VPC in an AWS account to which I have added a non-default (not “main”) route table and associated it with one of the three subnets in the VPC.

As you know, a subnet is associated with one and only one route table at a time. The purpose of the main route table is to provide routing for subnets that have no explicit association of their own. It cannot be deleted and, by default, provides a route for internal traffic for the entire VPC. A route table belongs to the VPC, which spans the region; each subnet lives in a single availability zone (AZ). AZs are physically separate data center facilities. So, the default route table that permits traffic across the entire VPC is actually providing inter-AZ (therefore inter-data center) routing. No wonder people love AWS VPCs: it’s such a powerful concept.
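To make the association rule concrete, here is a minimal sketch in plain Python (no AWS calls). The class names, subnet IDs, route table IDs, and the 172.31.0.0/16 CIDR are all made up for illustration; the only thing it tries to show is that a subnet with no explicit association falls back to the main route table.

```python
from dataclasses import dataclass, field


@dataclass
class RouteTable:
    table_id: str
    is_main: bool = False
    # destination CIDR -> target (hypothetical, simplified)
    routes: dict = field(default_factory=dict)


@dataclass
class Vpc:
    cidr: str
    tables: dict = field(default_factory=dict)        # table id -> RouteTable
    associations: dict = field(default_factory=dict)  # subnet id -> table id

    def add_table(self, table_id, is_main=False):
        # Every route table starts with a "local" route covering the VPC CIDR.
        table = RouteTable(table_id, is_main, {self.cidr: "local"})
        self.tables[table_id] = table
        return table

    def effective_table(self, subnet_id):
        # An explicit association wins; otherwise the main table applies.
        explicit = self.associations.get(subnet_id)
        if explicit is not None:
            return self.tables[explicit]
        return next(t for t in self.tables.values() if t.is_main)


# Hypothetical VPC: three subnets, one of them moved to a custom table.
vpc = Vpc("172.31.0.0/16")
vpc.add_table("rtb-main", is_main=True)
vpc.add_table("rtb-custom")
vpc.associations["subnet-c"] = "rtb-custom"

for subnet in ("subnet-a", "subnet-b", "subnet-c"):
    print(subnet, "->", vpc.effective_table(subnet).table_id)
```

In this toy model subnet-a and subnet-b resolve to the main table, while subnet-c resolves to the custom one, which mirrors the setup in my screenshot.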

VMware currently does not update that non-default route table with CIDR changes on its side. Therefore, in a failover situation, the SDDC will not be able to access resources in a subnet routed by a non-default table. That means some complication in network design: nothing major, but still something you have to be aware of. VMware says that fixing this is on the roadmap and Aarthi points this out in the video.
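If you want to check your own VPC for this gap, something like the following might help. It is only a sketch: the function name is mine, the sample data is invented, and 10.2.0.0/16 is a stand-in for whatever CIDR your SDDC actually uses. The input dictionary follows the shape that boto3’s `describe_route_tables` returns (`RouteTables`, `Associations`, `Routes`, `DestinationCidrBlock`), so in practice you could pass that response in directly. Note that it reports only explicitly associated subnets; subnets that fall back to the main table do not appear in the association list.

```python
def subnets_missing_route(route_tables_response, cidr):
    """Return (subnet_id, route_table_id) pairs for explicitly associated
    subnets whose route table has no route for the given CIDR."""
    missing = []
    for table in route_tables_response["RouteTables"]:
        destinations = {
            route.get("DestinationCidrBlock") for route in table.get("Routes", [])
        }
        if cidr in destinations:
            continue
        for assoc in table.get("Associations", []):
            # The main-table association carries no SubnetId, so it is skipped.
            if "SubnetId" in assoc:
                missing.append((assoc["SubnetId"], table["RouteTableId"]))
    return sorted(missing)


# Invented sample: the main table has the SDDC route, the custom table does not.
sample = {
    "RouteTables": [
        {
            "RouteTableId": "rtb-main",
            "Associations": [{"Main": True}],
            "Routes": [
                {"DestinationCidrBlock": "172.31.0.0/16"},
                {"DestinationCidrBlock": "10.2.0.0/16"},
            ],
        },
        {
            "RouteTableId": "rtb-custom",
            "Associations": [{"Main": False, "SubnetId": "subnet-c"}],
            "Routes": [{"DestinationCidrBlock": "172.31.0.0/16"}],
        },
    ]
}

print(subnets_missing_route(sample, "10.2.0.0/16"))
```

With the invented data above, the only subnet flagged is subnet-c on rtb-custom, which is exactly the kind of subnet that would lose reachability to the SDDC after a failover.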

This simple example goes to the big question I have about merging enterprise virtualization systems. These systems are so complex, and provide so many underlying services to the VMs running on them, that I wonder whether it is even possible for there ever to be just one garden. Some might argue it isn’t important, but I disagree. I suggest it’s too expensive, too duplicative (just too much infrastructure) to ask enterprises to live with the endless challenges of integration that two separate systems present. Sure, things like my little example VPC route table will be fixed (and may well have been by the time you read this).

But on the bigger question of merging hypervisors (which solves the core issue, IMO), I just don’t see that happening. Since that rock-star reception AWS and VMware got in 2016, I’ve been wondering who outsmarted whom. AWS got entry into the dominant virtualization system; VMware got a cloud. Two and a half years later, I’m still not sure.

Here’s another (non-technical) oddity about this whole area: AFAIK, VMware on AWS is the only AWS service sold by an outside technology company on the scale of VMware. That says to me that the parties don’t see newcomers to AWS adopting VMware directly and that VMware wants to maintain account control. Yet, you could not be in the four-hour briefing I was in and come away with the impression VMware is hand-waving at AWS integration. The people who spoke to us were brilliant architects and engineers, the kind of first-tier people you put on the mothership product in a software company. I’m confused by the whole thing. 🙂

My advice: stop building two different gardens on the hope that one day you’ll be able to completely tear down the wall between them. Consider instead the full Monty: how to migrate to the cloud of your choice directly. That means a single enterprise virtualization system, a journey to a destination in which VMware on AWS is an excellent travel companion.
